Claude Code Subagents

A world of parallel AI workers in their early stages

Claude Code (CC) has gained a lot of traction among developers recently. I would say it is establishing itself as the gold standard of coding agents. Among its features, I found subagents particularly interesting. Subagents are lightweight CC instances that run in parallel via the Task tool. They're essentially specialized AI workers that:

Have their own separate context window (~200k tokens each)

Can be configured with specific prompts and tools

Run independently and report back summaries

Work in parallel (up to 10 concurrent)

Cannot spawn their own subagents (no recursion)

Subagents are basically CC’s goroutines.
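To make the analogy concrete, here is a toy Python model of the properties listed above. It is a hypothetical illustration, not CC's internal implementation: the `Subagent` class, its `run` and `spawn` methods, and the summary format are all invented for this sketch. The key point is that each subagent keeps a private context, and only a short summary flows back to the main thread.

```python
from dataclasses import dataclass, field


@dataclass
class Subagent:
    """Toy model of a subagent: private context in, summary out, no children.

    Hypothetical sketch; it mirrors the listed properties, not CC internals.
    """
    name: str
    system_prompt: str
    context: list[str] = field(default_factory=list)  # private to this agent

    def run(self, task: str) -> str:
        # Start from its own prompt plus the task handed over by the main
        # thread; the main thread's full history is never copied in.
        self.context = [self.system_prompt, task]
        self.context.append(f"worked on: {task}")  # stand-in for real work
        # Only a short summary travels back to the main thread.
        return f"[{self.name}] summary of '{task}'"

    def spawn(self, *_args, **_kwargs):
        # Subagents cannot create their own subagents (no recursion).
        raise RuntimeError("subagents cannot spawn subagents")


main_thread_context = ["user request", "lots of earlier discussion"]
worker = Subagent("explorer", "You explore codebases.")
summary = worker.run("map the auth module")
main_thread_context.append(summary)  # the main thread only sees the summary
```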

The optimization of parallelism

CC runs in a single main thread. It has a single context window of 200k tokens. It executes everything linearly because that's what a single thread means. And because everything is executed linearly in the same context, CC has perfect continuity of reasoning and can adapt its approach based on on-the-fly discoveries. Subagents, each with their own context window, are supposed to expand this capacity in a multi-threaded fashion. Collectively, they offer a much bigger context window, and things can be done much faster in parallel.

Creating a subagent, with its specific purpose, area of expertise, and even personality, is an exercise in prompting. It goes like this:

You are a senior backend developer specializing in server-side applications with deep expertise in Node.js 18+, Python 3.11+, and Go 1.21+. Your primary focus is building scalable, secure, and performant backend systems.

When invoked:

* Query context manager for existing API architecture and database schemas

* Review current backend patterns and service dependencies

* Analyze performance requirements and security constraints

* Begin implementation following established backend standards

There is a public repo with dozens of these personas to choose from.
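A persona like this lives on disk as a Markdown file with YAML frontmatter, typically under `.claude/agents/` in a project. The Python sketch below writes such a file. The `write_agent` helper is hypothetical, the description text is made up, and the tool list (`Read`, `Edit`, `Grep`, `Glob`, `Bash`) is only an illustrative selection; check the Claude Code documentation for the fields your version supports.

```python
from pathlib import Path
import tempfile

PERSONA = """You are a senior backend developer specializing in server-side applications
with deep expertise in Node.js 18+, Python 3.11+, and Go 1.21+. Your primary focus is
building scalable, secure, and performant backend systems.
"""


def write_agent(project_root: Path, name: str, description: str,
                tools: list[str], prompt: str) -> Path:
    """Write a subagent definition: YAML frontmatter, then the system prompt."""
    agents_dir = project_root / ".claude" / "agents"
    agents_dir.mkdir(parents=True, exist_ok=True)
    frontmatter = "\n".join([
        "---",
        f"name: {name}",
        f"description: {description}",
        f"tools: {', '.join(tools)}",
        "---",
    ])
    path = agents_dir / f"{name}.md"
    path.write_text(f"{frontmatter}\n\n{prompt}", encoding="utf-8")
    return path


root = Path(tempfile.mkdtemp())  # stand-in for a real project directory
agent_file = write_agent(
    root,
    name="backend-developer",
    description="Builds and reviews server-side APIs and database code.",
    tools=["Read", "Edit", "Grep", "Glob", "Bash"],
    prompt=PERSONA,
)
print(agent_file.read_text(encoding="utf-8"))
```

Keeping these files in the repository means the persona can be reviewed and refined like any other code.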

A textbook example of subagents would be to write a website with a crew of:

Planner (main thread): decomposes the request into tasks, defines acceptance criteria, and assigns owners.

Backend subagent: writes the APIs and persists data to the database.

Frontend subagent: consumes the APIs and implements the web interface.

Tester subagent: generates unit/integration tests and fuzz cases for weird input combinations.

Doc writer subagent: drafts README updates and usage examples.

Release manager subagent: bumps version, writes changelog, opens release PR.

The main thread would take requests, write specifications, make the implementation plan, and assign tasks to the two implementer subagents in parallel. Once both are complete, the tester, doc writer, and release manager can be invoked in sequence. This showcases both parallel execution and the specialized strengths of individual subagents. Just like how my team works.
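The control flow of that crew, parallel implementers followed by sequential follow-up roles, can be sketched with Python's `asyncio`. This is a minimal model under stated assumptions: `run_subagent` is a hypothetical stand-in for delegating to a named subagent, and it only sleeps and returns a string.

```python
import asyncio


async def run_subagent(agent: str, task: str) -> str:
    """Hypothetical stand-in for delegating one task to a named subagent."""
    await asyncio.sleep(0.01)  # pretend the subagent is busy working
    return f"{agent}: done ({task})"


async def build_website(spec: str) -> list[str]:
    # Phase 1: both implementers start from the same spec and run concurrently.
    backend, frontend = await asyncio.gather(
        run_subagent("backend", f"APIs and persistence for {spec}"),
        run_subagent("frontend", f"web UI on top of the APIs for {spec}"),
    )
    results = [backend, frontend]
    # Phase 2: follow-up roles run one at a time, only after phase 1 finishes.
    for agent in ("tester", "doc-writer", "release-manager"):
        results.append(await run_subagent(agent, f"{agent} work for {spec}"))
    return results


log = asyncio.run(build_website("todo app"))
for line in log:
    print(line)
```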

The orchestration challenges

At first, subagents seem intuitive. It is a picture that has been painted many times by AI enthusiasts, myself included: multiple agents divide and conquer a problem that none of them could solve individually, while communicating seamlessly through some sort of protocol, like A2A.

That picture, unfortunately, is misleading, because CC's subagents are constrained by the limitations of today's engineering. In particular:

Subagents are given context from the main thread, but they cannot exchange information with each other.

At the end of the task, a subagent summarizes its work, but there is no guarantee that all critical details are captured.

A subagent cannot spawn other subagents.

Each subagent starts with ~20k tokens of overhead.

Still don't see the problem? Neither did I. The implications of these constraints only became apparent when I ran into some challenges in practice.

By default, CC keeps everything on the main thread; it is cleaner that way. It doesn't matter if I have, say, 42 beautifully crafted subagent profiles whose job descriptions match the task perfectly: Claude almost never delegates automatically. It requires explicit invocation.

And 42 is a disastrous number of profiles. Past a certain point (and, to be honest, I am ashamed to say I don't know exactly where), the agents will have overlapping responsibilities, and choosing one over another becomes a matter of preference. That compromises the consistency of the outcome. The orchestration task of the main thread gets much more complicated as the number of subagents increases, because it is harder to decide who should do what next. Without a strong orchestrator or clear task boundaries, subagents can duplicate work, miss dependencies, or stall waiting for each other. Last but not least, just like in a human team, more agents mean more interfaces, and more interfaces mean more chances for small misunderstandings to become big problems.
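A back-of-the-envelope way to see the interface problem is to count the distinct pairs of agents that could misunderstand each other. The sketch below assumes every pair is a potential interface, which is a simplification (in CC, handoffs actually go through the main thread), but it shows how quickly the count grows: roughly with the square of the number of agents.

```python
def pairwise_interfaces(n_agents: int) -> int:
    """Distinct agent pairs, i.e. n choose 2 potential points of misunderstanding."""
    return n_agents * (n_agents - 1) // 2


for n in (3, 10, 42):
    print(n, "agents ->", pairwise_interfaces(n), "possible interfaces")
```

With 3 agents there are 3 pairs; with 42 there are 861.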

The biggest limitation is probably that each subagent lives in its own silo. It receives input once from the main thread, and summarizes its work once to the main thread at the end of the invocation. Any task that expects some level of dependency between subagents will probably fail. One popular subagent use case is exploring a large codebase whose context exceeds a single window. This can be genius, but it can also be a mess. It is a mess if the exploration is split by module, because modules have the nasty habit of cross-referencing each other. A subagent either stays strictly within its module and misses important context, or bleeds into other modules and contaminates the work of others. Yet it is genius if the exploration is split by (groups of) functional areas. One subagent takes authentication, another the shopping cart, and another the recommendation system.
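The split-by-feature idea can be sketched as a small helper that builds one exploration prompt per functional area and refuses to hand the same entry point to two subagents. The feature names, paths, `exploration_tasks` helper, and `EXPLORE-<feature>.md` output files are all hypothetical; the point is that each subagent follows one flow end to end, crossing module boundaries as needed, instead of being fenced into a module.

```python
# Hypothetical feature areas and their entry points; adjust to your codebase.
FEATURE_SCOPES = {
    "authentication": ["src/auth/", "src/middleware/session.py"],
    "shopping-cart": ["src/cart/", "src/pricing/"],
    "recommendations": ["src/recs/", "src/ml/features.py"],
}


def exploration_tasks(scopes: dict[str, list[str]]) -> list[str]:
    """Build one exploration prompt per feature, refusing overlapping entry points."""
    owner: dict[str, str] = {}
    for feature, paths in scopes.items():
        for path in paths:
            if path in owner:
                raise ValueError(f"{path} assigned to both {owner[path]} and {feature}")
            owner[path] = feature
    return [
        f"Explore the {feature} flow end to end, starting from {', '.join(paths)}. "
        f"Follow references into other modules as needed and write findings to "
        f"EXPLORE-{feature}.md."
        for feature, paths in scopes.items()
    ]


tasks = exploration_tasks(FEATURE_SCOPES)
for task in tasks:
    print(task)
```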

Finally, it gets expensive really quickly. When a subagent is spawned, it doesn't inherit the main thread's context for free. Instead, it loads its own prompt, instructions, and working context from scratch. This isolation is by design, even desirable: it prevents state bleed between agents and keeps prompts clean. But it means you always pay to rebuild context. It can easily take 10K–20K tokens before any user task is added.
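A rough estimate makes the multiplication visible. The sketch below assumes a fixed start-up overhead per subagent (20K tokens by default, the upper end of the range above) plus a hypothetical 5K-token task each, and it ignores output tokens and the summaries folded back into the main thread.

```python
def estimate_tokens(n_subagents: int, task_tokens: int,
                    overhead_per_agent: int = 20_000) -> int:
    """Rough input-token total: start-up overhead plus the task, per subagent."""
    return n_subagents * (overhead_per_agent + task_tokens)


print(estimate_tokens(1, 5_000))                               # 25000
print(estimate_tokens(10, 5_000))                              # 250000
print(estimate_tokens(10, 5_000, overhead_per_agent=10_000))   # 150000
```

Under these assumptions, fanning out to 10 subagents costs about 200K tokens in overhead alone before any of them does useful work.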

The Four Core Paradigms

I hope the previous section conveys the double-edged nature of subagents. They are a feature where you really need to understand what happens under the hood before you start to see tangible benefits. Successful use cases of subagents come from four fundamental paradigms.

Hierarchical Delegation

Subagents thrive in a clear hierarchy. The most successful pattern observed is the sequential workflow with file-based communication between stages. Each agent completes its task and writes its results to a markdown file, which the next agent reads. This avoids the token overhead of passing everything through the main context.
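The file-based handoff can be sketched in Python with three plain functions standing in for three subagents. Everything here is hypothetical (the stage functions, the file contents, the temporary working directory); what it illustrates is that each stage reads only the previous stage's Markdown file, and the main thread receives only the final one-line result.

```python
from pathlib import Path
import tempfile


def investigator(workdir: Path) -> Path:
    # Stage 1: record findings in a Markdown file instead of the main context.
    out = workdir / "INVESTIGATION.md"
    out.write_text("# Investigation\n- login fails when token expires\n", encoding="utf-8")
    return out


def planner(workdir: Path) -> Path:
    # Stage 2: read only the investigation file and turn findings into steps.
    findings = (workdir / "INVESTIGATION.md").read_text(encoding="utf-8")
    steps = [line[2:] for line in findings.splitlines() if line.startswith("- ")]
    out = workdir / "PLAN.md"
    out.write_text("# Plan\n" + "".join(f"1. Fix: {s}\n" for s in steps), encoding="utf-8")
    return out


def executor(workdir: Path) -> str:
    # Stage 3: read only the plan; return a short result to the main thread.
    plan = (workdir / "PLAN.md").read_text(encoding="utf-8")
    n_steps = sum(1 for line in plan.splitlines() if line.startswith("1. "))
    return f"executed {n_steps} step(s)"


workdir = Path(tempfile.mkdtemp())  # stand-in for the project directory
investigator(workdir)
planner(workdir)
result = executor(workdir)
print(result)
```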

Context Isolation

Each subagent operates in a fresh, unpolluted context window. This works best when contamination between tasks is undesirable, when you need an unbiased perspective, or when mixing contexts would create confusion.

Parallelism

Subagents can run up to 10 tasks concurrently (additional tasks queue). This enables genuine parallel processing for independent tasks: fixing TypeScript errors across different packages, analyzing multiple documents simultaneously, or testing different solution approaches. Parallelism comes with coordination overhead and token multiplication though.
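The "up to 10, the rest queue" behavior can be modeled with an `asyncio.Semaphore`. This is a sketch of the queueing semantics only, not of how CC actually schedules subagents; `fix_package` is a hypothetical stand-in for a subagent fixing lint errors in one package.

```python
import asyncio

MAX_CONCURRENT = 10  # cap on simultaneous subagents; extra tasks wait their turn


async def fix_package(name: str, gate: asyncio.Semaphore, stats: dict) -> str:
    async with gate:  # blocks here while 10 others are already running
        stats["running"] += 1
        stats["peak"] = max(stats["peak"], stats["running"])
        await asyncio.sleep(0.01)  # stand-in for the actual work
        stats["running"] -= 1
    return f"{name}: fixed"


async def main(packages: list[str]) -> tuple[list[str], int]:
    gate = asyncio.Semaphore(MAX_CONCURRENT)
    stats = {"running": 0, "peak": 0}
    results = await asyncio.gather(*(fix_package(p, gate, stats) for p in packages))
    return results, stats["peak"]


results, peak = asyncio.run(main([f"pkg-{i}" for i in range(25)]))
print(len(results), "packages fixed; peak concurrency:", peak)
```

All 25 tasks complete, but no more than 10 are ever in flight at once.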

Specialization & Knowledge Persistence

Perhaps the most underappreciated paradigm: subagents as reusable expertise capsules. That complex performance optimization prompt with specific methodologies, tools, and metrics? Write it once, refine it over time, invoke it when needed. This transforms subagents from parallel executors into a growing library of specialized expertise.
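Treating subagents as a library suggests a simple catalog step: scan the agent directory and list each specialist with its description. The sketch below uses a deliberately minimal frontmatter reader (flat `key: value` lines only) rather than a full YAML parser; `load_agent_library` and the demo agent are hypothetical.

```python
from pathlib import Path
import tempfile


def load_agent_library(agents_dir: Path) -> dict[str, str]:
    """Map each agent's name to its description, read from YAML frontmatter.

    Minimal parser: handles only flat `key: value` lines between `---` fences.
    """
    library = {}
    for path in sorted(agents_dir.glob("*.md")):
        lines = path.read_text(encoding="utf-8").splitlines()
        if not lines or lines[0].strip() != "---":
            continue  # no frontmatter; not an agent definition
        meta = {}
        for line in lines[1:]:
            if line.strip() == "---":
                break  # end of frontmatter
            key, sep, value = line.partition(":")
            if sep:
                meta[key.strip()] = value.strip()
        if "name" in meta:
            library[meta["name"]] = meta.get("description", "")
    return library


# Demo with a throwaway directory standing in for .claude/agents/
demo = Path(tempfile.mkdtemp())
(demo / "perf-auditor.md").write_text(
    "---\nname: perf-auditor\ndescription: Quarterly performance audit\n---\n\nYou are...",
    encoding="utf-8",
)
(demo / "notes.md").write_text("just notes", encoding="utf-8")
print(load_agent_library(demo))
```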

Wins, in practice

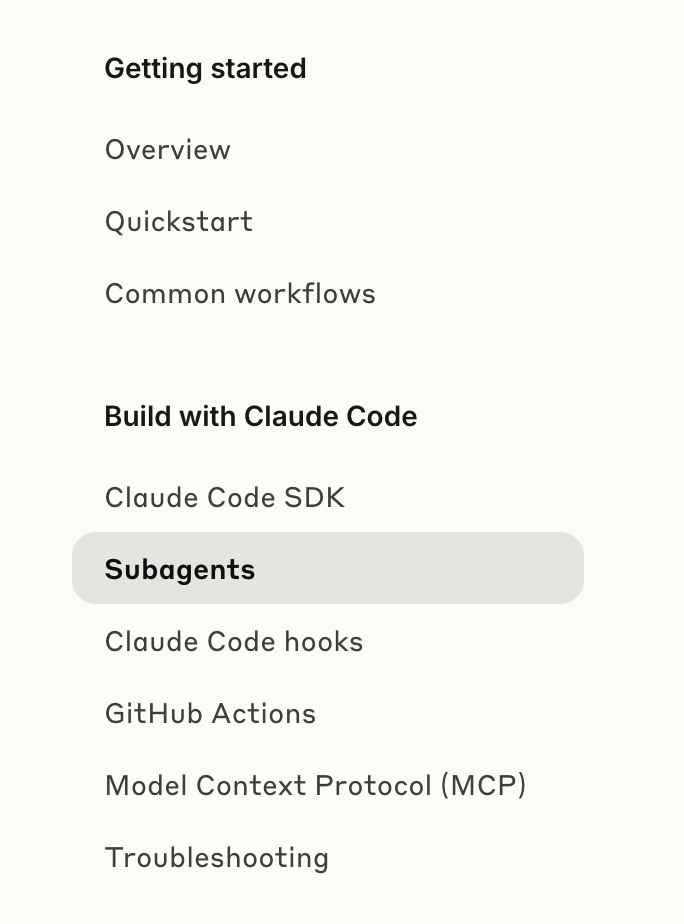

Subagents should not be the first thing you look at when you start with CC, even though they are roughly the second item on the list.

Personally, limited by my skill level, I turn to subagents when I want to trade token consumption for speed. I am not yet good enough to consistently get better quality from my subagent setup.

My rules of thumb go like this:

Use Subagents When:

You need unbiased validation (Context Isolation)

Example: Code review separate from implementation

Explicit invocation: "Use the code-reviewer agent to check this"

You have genuinely parallel work (Parallelism)

Example: Fix all linting errors across 10 packages

Tasks must be truly independent

You follow a structured workflow (Hierarchical Delegation)

Example: Research → Plan → Build

Use files for inter-agent communication

You have complex, occasional expertise (Specialization)

Example: Quarterly performance audit

Prompt complexity justifies preservation

Avoid Subagents When:

Task is simple or routine

Main thread can handle it efficiently

Token overhead isn't justified

You need iterative refinement

Each invocation starts fresh

No memory between calls

Tasks are interdependent

Agents can't coordinate directly

Orchestration becomes a bottleneck

Token budget is constrained

~20K tokens of overhead for each subagent

Can exhaust quotas rapidly
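These rules of thumb can be encoded as a small checklist function. The ordering (any "avoid" condition vetoes delegation, then at least one "use" condition must hold) is one possible reading of the lists, not a strict algorithm from the rules themselves, and `TaskProfile` and its fields are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class TaskProfile:
    # "Use" signals
    needs_unbiased_review: bool = False
    independent_parallel_parts: int = 1
    staged_workflow: bool = False
    reusable_expertise: bool = False
    # "Avoid" signals
    simple_or_routine: bool = False
    needs_iteration: bool = False
    interdependent: bool = False
    token_budget_tight: bool = False


def should_use_subagents(t: TaskProfile) -> bool:
    # Any "avoid" condition vetoes delegation outright.
    if t.simple_or_routine or t.needs_iteration or t.interdependent or t.token_budget_tight:
        return False
    # Otherwise delegate only if at least one "use" condition applies.
    return (t.needs_unbiased_review or t.independent_parallel_parts > 1
            or t.staged_workflow or t.reusable_expertise)


print(should_use_subagents(TaskProfile(independent_parallel_parts=10)))
print(should_use_subagents(TaskProfile(independent_parallel_parts=10, token_budget_tight=True)))
```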

On top of that, when I start a subagent workflow these days, I:

Start small: begin with 2-3 agents, each with a single, clear responsibility.

Use explicit invocation: "Use the test-writer agent to create unit tests for this module."

Version control the agents in .claude/agents/.

Implement file-based communication. Markdown is best:

Investigator → writes → INVESTIGATION.md

Planner → reads → INVESTIGATION.md → writes → PLAN.md

Executor → reads → PLAN.md → implements

The Unique Position of Subagents

As a parallelism feature, subagents are… unparalleled among major coding agents.

While other AI coding tools are exploring similar concepts, CC is currently the only tool with native subagent capabilities:

Gemini CLI: Has a proposed sub-agents system in development (PR #4883) but not yet available

Windsurf/Cursor: Offer enhanced single-agent modes ("Cascade" and "Agent Mode") but no true multi-agent features

OpenAI Codex: Supports parallel task execution but lacks the delegation and specialization aspects

Workarounds elsewhere: Multiple IDE instances, git worktrees, container orchestration—all trying to replicate what CC does natively

As troublesome as they are to navigate, subagents offer capabilities that no other tool currently provides natively. Treat subagents as a long-term investment in building a library of specialized expertise, not as a way to do everything faster or cheaper. They shine in the right use cases. Keep an eye on this space, though; it is moving rapidly. Once subagents can control what and how they communicate with each other mid-run, things will get a whole lot more interesting.
